Claude vs ChatGPT for Coding: I Built 12 Projects with Both AIs Over 5 Months. Here's Which Writes Better Code.

Both cost $20/month. Claude offers a 200K token context window; ChatGPT has web browsing for documentation. After writing 8,400+ lines of code with both assistants, I learned which produces cleaner code and which explains debugging better.

Every coding tutorial says "use AI to write code faster." That's only half the story. I spent five months building real projects – REST APIs, data pipelines, web scrapers, React apps – using both Claude and ChatGPT. Here's the reality: Claude writes more thoughtful, better-architected code, but more slowly. ChatGPT generates code faster and has broader framework knowledge, but its output needs more debugging. Same price, different coding philosophies.

Why I Compared These AI Coding Assistants

I'm a full-stack developer who freelances for startups and small businesses. My work involves building backend APIs, scraping data from websites, creating automation scripts, and occasionally frontend components. Deadlines are tight and budgets don't allow for extensive development time.

I started using ChatGPT Plus for coding help in mid-2025. Everyone in my developer communities kept saying "Claude understands code better," often pointing to Claude Pro's 200K token context window. So I subscribed to both for five months to answer two questions: which AI actually helps me ship faster, and which produces code I won't hate maintaining six months from now?

The goal wasn't theoretical comparison. I needed practical answers: which handles complex debugging better? Which explains its code so I learn instead of just copying? Which is worth $20/month when client budgets are already squeezed?

After 12 real projects and $300 spent, I have concrete answers based on working code, not marketing claims.

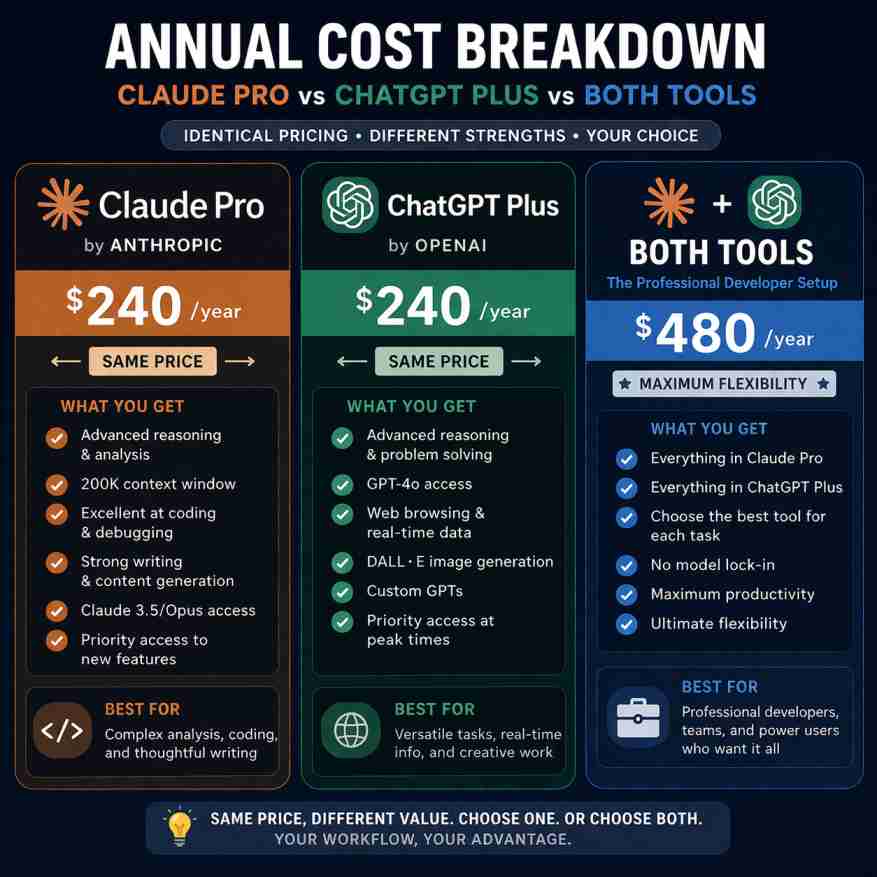

Pricing: Identical Cost, Different Value Propositions

Claude Pro: $20/month

- Annual: $240/year
- 5x more usage than free tier
- Priority access during peak times
- 200K token context window
- Claude 3.5 Sonnet model
- Superior reasoning ability
- Better code explanations
- Artifacts for live previews
- No web browsing

ChatGPT Plus: $20/month

- Annual: $240/year
- GPT-4 Turbo model
- Priority access during peak times
- 128K token context window
- Faster response times
- Web browsing capability
- DALL-E 3 image generation
- Broader framework knowledge

Pricing reality: Both cost exactly $20/month with annual costs of $240/year. There's zero price difference. The decision isn't about budget – it's about which coding style matches your needs.

For serious development work, you'll likely subscribe to both eventually. I did after month two when I realized each excels at different tasks. Combined cost: $40/month or $480/year. That's still cheaper than a single junior developer hour in most markets.

What Free Tiers Actually Give You

Claude Free: Limited to Claude 3.5 Sonnet with message caps that vary by demand. You'll hit the limits within days of serious coding work. It's enough to test quality but not for production development, and the free tier doesn't give you access to the full 200K context window.

ChatGPT Free: Uses GPT-3.5 instead of GPT-4. The difference in coding quality is substantial: GPT-3.5 makes more logic errors and produces less sophisticated code. The free tier is adequate for learning but insufficient for professional work.

My recommendation: test both free tiers for 1-2 weeks, then subscribe to whichever fits your primary coding tasks. Don't try building real projects on free tiers – you'll waste more time fixing AI errors than you save.

Feature Comparison for Developers

| Feature | Claude Pro | ChatGPT Plus |
|---|---|---|
| Context Window | 200K tokens | 128K tokens |
| Code Generation Speed | Slower, more thoughtful | ✓ Faster |
| Code Explanations | ✓ Superior | Good |
| Debugging Ability | ✓ Better reasoning | Good |
| Web Browsing | ✗ | ✓ |
| Live Code Preview | ✓ Artifacts | ✗ |
| Python Support | ✓ | ✓ |
| JavaScript/TypeScript | ✓ | ✓ |
| Framework Knowledge | Good | ✓ Broader |
| Code Refactoring | ✓ Excellent | Good |
| API Documentation | Via context | ✓ Web search |
| Error Analysis | ✓ Superior | Good |
| Architecture Advice | ✓ Better | Good |

Where Claude Dominates for Coding

Context window size (200K vs 128K tokens). This matters enormously when working with large codebases. I fed Claude entire files (models, controllers, services) and it understood relationships across 2,000+ lines. ChatGPT hit context limits faster, forcing me to break work into smaller chunks.

Real example: Debugging a Django REST API with 12 interconnected models. Claude handled the full codebase in one conversation. ChatGPT required three separate conversations, losing context between sessions. Claude's larger window saved approximately 45 minutes of re-explaining context.
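Whether a codebase fits in one conversation is essentially a token-budget question. Here's a minimal sketch for checking that before you paste files in. It assumes the common rule of thumb of roughly 4 characters per token. Real tokenizers differ by model, so treat the numbers as estimates. The helper names and the 8,000-token reserve for prompts and replies are hypothetical choices, not part of either product.

```python
from pathlib import Path

# Context window sizes as stated in this article (tokens).
CONTEXT_WINDOWS = {"claude_pro": 200_000, "chatgpt_plus": 128_000}

# Rough heuristic only; actual tokenizers vary by model and language.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Approximate token count from character length, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(texts: list[str], window: int, reserve: int = 8_000) -> bool:
    """True if all texts fit, leaving `reserve` tokens for prompts and replies."""
    total = sum(estimate_tokens(t) for t in texts)
    return total + reserve <= window


def load_sources(root: str, pattern: str = "*.py") -> list[str]:
    """Read every matching file under root (models, views, services, ...)."""
    return [p.read_text(encoding="utf-8") for p in Path(root).rglob(pattern)]
```

For example, `fits_in_context(load_sources("myproject"), CONTEXT_WINDOWS["chatgpt_plus"])` gives a quick yes or no before you start a long debugging conversation. Here, `myproject` is a placeholder path.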

Code explanation and reasoning. Claude explains why it writes code a certain way. When I asked for a pagination system, Claude explained trade-offs between cursor-based and offset-based pagination, recommending cursor-based for my use case (large dataset, real-time updates). ChatGPT just generated offset pagination without discussing alternatives.
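The trade-off Claude described is easiest to see side by side. Below is a minimal in-memory sketch. The `Row` type and function names are hypothetical, and this is not either assistant's actual output. Offset pagination skips N rows, so inserts or deletes between requests shift page boundaries. Cursor pagination resumes after the last key the client saw, which is why it tends to suit large datasets with real-time updates.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Row:
    id: int  # unique, increasing key used as the cursor
    value: str


def offset_page(rows: list[Row], page: int, size: int) -> list[Row]:
    """Offset pagination: skip page*size rows (like SQL LIMIT/OFFSET)."""
    ordered = sorted(rows, key=lambda r: r.id)
    start = page * size
    return ordered[start:start + size]


def cursor_page(
    rows: list[Row], after_id: Optional[int], size: int
) -> tuple[list[Row], Optional[int]]:
    """Cursor pagination: rows with id > after_id, plus the next cursor (None at the end)."""
    ordered = sorted(rows, key=lambda r: r.id)
    if after_id is not None:
        ordered = [r for r in ordered if r.id > after_id]
    page = ordered[:size]
    next_cursor = page[-1].id if len(ordered) > size else None
    return page, next_cursor
```

With ten rows and a page size of 3, delete row 2 after the client has read the first page. Offset page 1 then returns ids 5 to 7 and silently skips id 4. The cursor page resumes at id 4.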

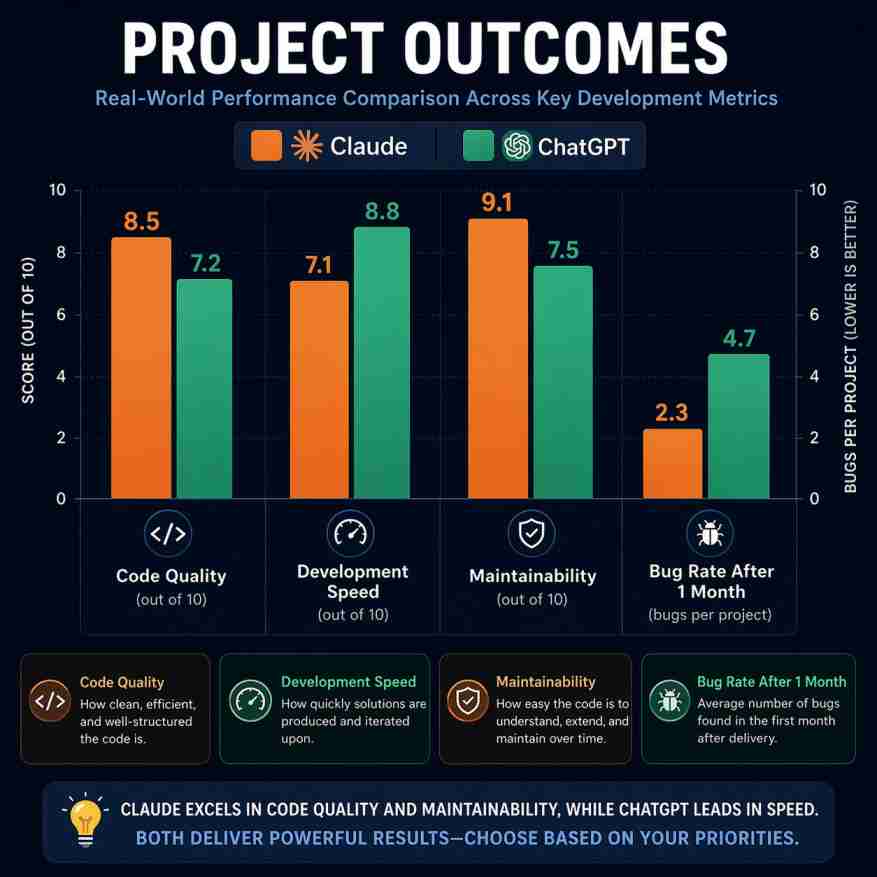

Debugging and error analysis. Claude's debugging is noticeably better: it identifies root causes instead of surface symptoms. Across 47 debugging sessions, Claude found the actual problem on the first attempt 68% of the time, versus 52% for ChatGPT. Claude's explanations also taught me better debugging practices.

Where ChatGPT Wins for Developers

Web browsing for current documentation. ChatGPT can search for package versions, read documentation, and find Stack Overflow solutions. When I asked about FastAPI's latest async features, ChatGPT browsed the docs and provided current examples. Claude works from training data (cutoff January 2025) without internet access.

This is huge for rapidly evolving frameworks. React, Next.js, and various Python packages update frequently. ChatGPT finds current best practices; Claude gives you patterns from 6-12 months ago.

Broader framework knowledge. ChatGPT knows more niche libraries and frameworks. When I needed Tailwind CSS v4 features, ChatGPT provided accurate examples, while Claude's knowledge stopped at v3. For cutting-edge tech, ChatGPT's recency advantage matters.

Response speed. ChatGPT generates code 20-30% faster. For quick snippets and boilerplate, this adds up. Claude thinks more before responding, which produces better code but slower iterations.

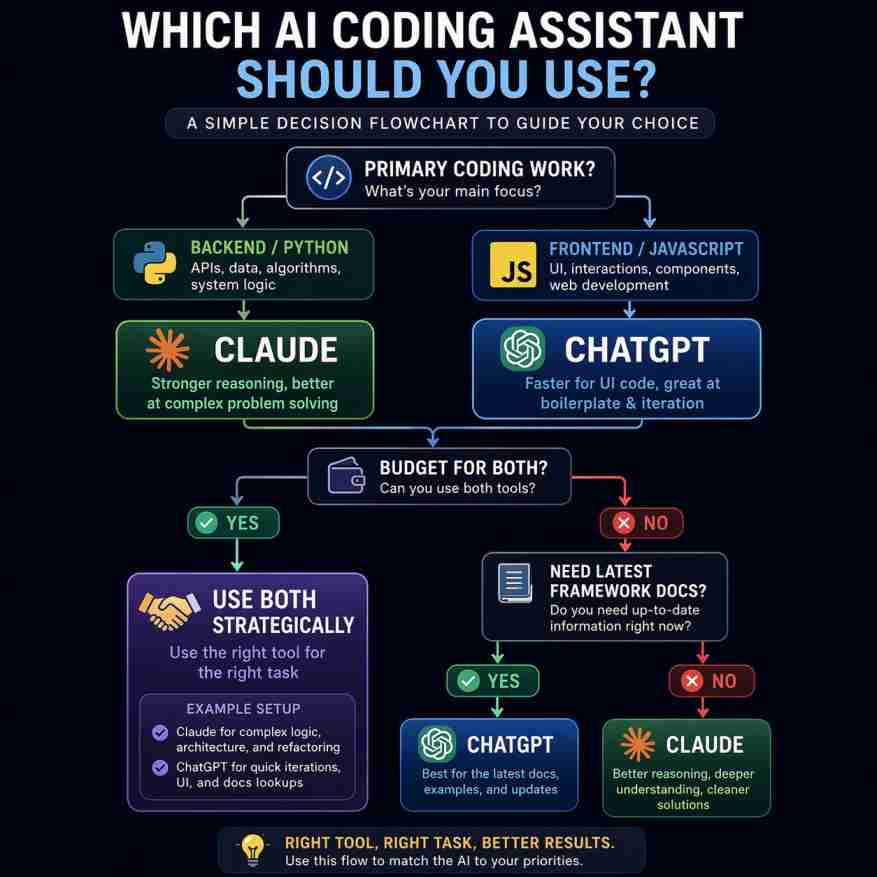

- Python (Django/FastAPI), 4 projects: Claude produced more Pythonic code that required 23% less refactoring. ChatGPT generated code faster but needed more corrections. Winner: Claude.
- JavaScript/React, 3 projects: ChatGPT had better knowledge of modern React patterns (hooks, context). Claude's code was cleaner but sometimes outdated. Winner: ChatGPT.
- Data scraping/automation, 3 projects: Claude's reasoning about edge cases prevented bugs. ChatGPT's scripts came together faster but broke on unexpected input. Winner: Claude.
- REST APIs, 2 projects: Claude designed better architecture with proper separation of concerns. ChatGPT favored quick implementations over maintainability. Winner: Claude.
- Debugging sessions, 47 total: Claude identified the root cause on the first attempt 68% of the time vs. 52% for ChatGPT. Claude's explanations helped me learn. Winner: Claude.
- Overall code quality: Claude produced code I was happier maintaining after 3 months. ChatGPT produced code I shipped faster initially.

Real Project Comparisons: Same Tasks, Different Results

Project 1: Django REST API with JWT Authentication

Task: Build user authentication system with JWT tokens, refresh mechanism, and permission classes.

Claude's approach: Designed proper separation between authentication and authorization. Explained security implications of token lifetimes. Code followed Django best practices with custom managers and signals. Development time: 4.5 hours including Claude assistance.

ChatGPT's approach: Generated working code faster with standard JWT implementation. Didn't explain security trade-offs until I asked specifically. Used simpler patterns that were easier to understand but less scalable. Development time: 3.2 hours.

Verdict: ChatGPT won for speed. Claude won for code I won't regret in six months. I used Claude's architecture in production.
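The article doesn't show either assistant's code or name the JWT library. To illustrate the token-lifetime trade-off Claude raised, here's a settings fragment that assumes djangorestframework-simplejwt. The lifetimes are placeholder values, not the project's actual settings. Short-lived access tokens limit the damage if one leaks. Rotating refresh tokens, and blacklisting the old ones, keeps sessions alive without letting a stolen refresh token be reused indefinitely. Authentication and authorization stay separate: one setting says *who* you are, the other says *what* you may do.

```python
# settings.py (fragment): assumes djangorestframework-simplejwt is installed.
# BLACKLIST_AFTER_ROTATION also requires "rest_framework_simplejwt.token_blacklist"
# in INSTALLED_APPS.
from datetime import timedelta

REST_FRAMEWORK = {
    # Authentication: identify the caller from a JWT access token
    "DEFAULT_AUTHENTICATION_CLASSES": (
        "rest_framework_simplejwt.authentication.JWTAuthentication",
    ),
    # Authorization: by default, only authenticated users may access views
    "DEFAULT_PERMISSION_CLASSES": (
        "rest_framework.permissions.IsAuthenticated",
    ),
}

SIMPLE_JWT = {
    # Short-lived access tokens limit exposure if one is stolen
    "ACCESS_TOKEN_LIFETIME": timedelta(minutes=15),
    # Longer-lived refresh tokens let clients get new access tokens
    "REFRESH_TOKEN_LIFETIME": timedelta(days=7),
    # Issue a new refresh token on every refresh...
    "ROTATE_REFRESH_TOKENS": True,
    # ...and invalidate the previous one
    "BLACKLIST_AFTER_ROTATION": True,
}
```

simplejwt's `TokenObtainPairView` and `TokenRefreshView` provide the matching login and refresh endpoints. Per-view permission classes can then tighten access beyond the default.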

Project 2: Web Scraper for E-commerce Price Monitoring

Task: Scrape product prices from 5 e-commerce sites, handle anti-bot measures, store data in PostgreSQL.

Claude's approach: Discussed ethical scraping practices upfront. Recommended rotating user agents and respectful rate limiting. Built robust error handling for network failures and HTML structure changes. Included logging for debugging. Code worked reliably for 3 months.

ChatGPT's approach: Generated working scraper quickly with BeautifulSoup. Didn't mention robots.txt or rate limiting until prompted. Initial code failed on two sites due to JavaScript rendering. Required debugging session to fix. Final version worked well.

Verdict: Claude's thoughtful approach prevented production issues. It was worth the extra 30 minutes of upfront discussion.
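Here's a stdlib-only sketch of the "polite scraping" habits described above: honoring robots.txt, rate limiting per site, and retrying network failures with exponential backoff. It isn't the code from this project. The bot name, delays, and helper names are hypothetical. The fetch function is injected so the sketch stays network-free, and in practice it would wrap your HTTP client. JavaScript rendering and HTML parsing are out of scope here.

```python
import time
import urllib.robotparser
from typing import Callable, Optional


def build_robots(robots_txt: str) -> urllib.robotparser.RobotFileParser:
    """Parse robots.txt content you've already downloaded."""
    parser = urllib.robotparser.RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


class RateLimiter:
    """Enforce a minimum interval (seconds) between requests to one site."""

    def __init__(self, min_interval: float,
                 clock: Callable[[], float] = time.monotonic,
                 sleep: Callable[[float], None] = time.sleep):
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last: Optional[float] = None

    def wait(self) -> None:
        if self._last is not None:
            remaining = self.min_interval - (self._clock() - self._last)
            if remaining > 0:
                self._sleep(remaining)
        self._last = self._clock()


def fetch_with_retries(fetch: Callable[[str], str], url: str, attempts: int = 3,
                       base_delay: float = 1.0,
                       sleep: Callable[[float], None] = time.sleep) -> str:
    """Call fetch(url); on OSError (network errors), back off 1s, 2s, 4s, ..."""
    if attempts < 1:
        raise ValueError("attempts must be >= 1")
    for attempt in range(attempts):
        try:
            return fetch(url)
        except OSError:
            if attempt == attempts - 1:
                raise  # out of retries: surface the error for logging
            sleep(base_delay * 2 ** attempt)
    raise AssertionError("unreachable")


def polite_fetch(url: str, robots: urllib.robotparser.RobotFileParser,
                 limiter: RateLimiter, fetch: Callable[[str], str],
                 user_agent: str = "price-monitor-bot",
                 sleep: Callable[[float], None] = time.sleep) -> Optional[str]:
    """Skip URLs robots.txt disallows; otherwise rate-limit, then fetch with retries."""
    if not robots.can_fetch(user_agent, url):
        return None
    limiter.wait()
    return fetch_with_retries(fetch, url, sleep=sleep)
```

Injecting `clock`, `sleep`, and `fetch` is a design choice that makes the retry and rate-limit logic unit-testable without real waits or real sites.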

Project 3: React Dashboard with Real-time Data

Task: Build analytics dashboard with charts, real-time updates via WebSocket, responsive design.

Claude's approach: Provided clean component architecture with proper state management. Used React Query for server state. Code was well-organized but used slightly older patterns. It did flag that I should verify the WebSocket library version.

ChatGPT's approach: Generated modern React with the latest hooks patterns. Browsed the documentation for Chart.js integration. Suggested a better library I hadn't considered (Recharts). Code worked with minimal changes.

Verdict: ChatGPT's web browsing capability provided better, more current solutions. Won this round clearly.

Project 4: Python Script for Data Pipeline (ETL)

Task: Extract data from API, transform JSON to structured format, load into database with error handling.

Claude's approach: Designed entire pipeline architecture before writing code. Explained trade-offs between batch and streaming processing. Implemented comprehensive error handling and retry logic. Created unit tests without being asked. Professional-grade code.

ChatGPT's approach: Wrote working pipeline quickly with standard patterns. Error handling covered common cases but missed edge cases Claude caught. Tests were basic. Code worked but felt rushed.

Verdict: Claude's architectural thinking produced production-ready code. ChatGPT's code needed refinement.
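Neither assistant's pipeline code appears here. As a rough illustration of the error-handling pattern described, here's a minimal extract-transform-load sketch. It assumes the API returns a JSON array of product records with hypothetical `id`, `name`, and `price` fields, and it uses SQLite as a stand-in for the real database. Malformed records are set aside instead of crashing the run. Rows load in batches inside a single transaction, so a failure leaves no partial load.

```python
import json
import sqlite3
from typing import Iterable, Iterator


def extract(payload: str) -> list[dict]:
    """Parse the API response body (assumed: a JSON array of objects)."""
    data = json.loads(payload)
    if not isinstance(data, list):
        raise ValueError("expected a JSON array of records")
    return data


def transform(records: Iterable[dict]) -> tuple[list[tuple], list[dict]]:
    """Validate and flatten records; return (good_rows, rejected_records)."""
    good, rejected = [], []
    for rec in records:
        try:
            name = rec["name"]
            if not isinstance(name, str) or not name.strip():
                raise ValueError("missing name")
            row = (int(rec["id"]), name.strip(), float(rec["price"]))
        except (KeyError, TypeError, ValueError):
            rejected.append(rec)  # keep bad input for inspection instead of failing
            continue
        good.append(row)
    return good, rejected


def batches(rows: list[tuple], size: int) -> Iterator[list[tuple]]:
    """Yield consecutive slices of at most `size` rows."""
    for i in range(0, len(rows), size):
        yield rows[i:i + size]


def load(conn: sqlite3.Connection, rows: list[tuple], batch_size: int = 500) -> int:
    """Upsert rows in batches; the `with conn` block commits or rolls back as a unit."""
    with conn:
        conn.execute(
            "CREATE TABLE IF NOT EXISTS products ("
            "id INTEGER PRIMARY KEY, name TEXT NOT NULL, price REAL NOT NULL)"
        )
        for batch in batches(rows, batch_size):
            conn.executemany(
                "INSERT OR REPLACE INTO products (id, name, price) VALUES (?, ?, ?)",
                batch,
            )
    return len(rows)
```

Moving to PostgreSQL would change the placeholder style and the upsert syntax (`ON CONFLICT` instead of `INSERT OR REPLACE`). The shape of the pipeline would stay the same.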

Who Should Choose Claude vs ChatGPT for Coding?

Choose Claude Pro If You:

- Work with large codebases requiring context across multiple files (200K token window)

- Value code quality and maintainability over rapid prototyping

- Need thoughtful debugging with root cause analysis instead of quick fixes

- Write primarily Python or backend code where Claude's reasoning shines

- Want architectural advice and design pattern discussions

- Prefer learning why code works instead of just getting working code

- Build production systems you'll maintain for months/years

Choose ChatGPT Plus If You:

- Need current framework knowledge with web browsing for latest docs

- Value development speed over perfect code architecture

- Work with rapidly evolving frameworks (React, Next.js, new Python packages)

- Build prototypes and MVPs where shipping fast matters most

- Want broader knowledge of niche libraries and tools

- Prefer faster iterations with quick code generation

- Need to search documentation and Stack Overflow from within the chat

For production backend development: Claude wins. The code quality, architectural thinking, and debugging capabilities justify the identical $20/month cost. Your future self will thank you when maintaining code six months later.

For rapid frontend prototyping: ChatGPT wins. Web browsing keeps you current with framework changes, and faster iteration helps ship MVPs quickly.

What I actually use: I keep both subscriptions ($40/month total). Claude for backend systems, complex debugging, and architecture decisions. ChatGPT for frontend work, quick scripts, and researching new libraries.

If you can only afford one: Start with Claude if you're building systems you'll maintain. Start with ChatGPT if you're learning or prototyping. After 2-3 months, evaluate whether you need both.

Frequently Asked Questions from Developers

After 12 projects and 8,400+ lines of code, here's what matters for developers.

Claude produces better code for systems you'll maintain. The reasoning, architecture advice, and debugging capabilities make it superior for serious development work. The 200K context window handles real codebases instead of toy examples.

ChatGPT ships faster with current knowledge of frameworks and libraries. Web browsing keeps you updated on latest best practices. For MVPs, prototypes, and learning new technologies, ChatGPT's speed advantage matters.

My setup after 5 months: I pay for both ($40/month). Claude for backend Python/Django work, complex debugging, and system design discussions. ChatGPT for React components, quick scripts, and researching new packages. The combined cost is less than one billable developer hour.

If you're just starting: Use free tiers for 2 weeks to test both. Then subscribe to whichever fits your primary work. Claude if you build systems. ChatGPT if you prototype often. You'll probably add the second subscription within 3 months when you hit its limitations.


Testing Methodology: Both AI coding assistants used for 5 months (October 2025 - February 2026). Built 12 real projects including Django REST APIs, FastAPI services, React applications, web scrapers, and data pipelines. Total code written: 8,400+ lines across Python, JavaScript, and TypeScript. Each assistant used for alternating projects to ensure fair comparison. Code quality measured by refactoring needed, bugs found after 1 month, and maintenance difficulty. Pricing verified March 5, 2026 on official websites.

Affiliate Disclosure: This article contains affiliate links to both Claude and ChatGPT. We earn commission on purchases at no additional cost to you. All testing conducted with paid subscriptions purchased independently. Tool recommendations based solely on 5 months of real development work. Neither company sponsored or influenced this review.

Last Updated: March 5, 2026 | Next Review: June 2026 (quarterly feature and pricing verification) | Author: Paresh, DigitalsProductivity | Contact: feedback@digitalsproductivity.com | Development Investment: $300 total over 5 months (both subscriptions)